The Pin Factory Was Always the Point

A Room Full of Half-Jobs

In the late 1700s, Adam Smith visited a pin factory. A few workers, some wire, and a repetitive process turning out something as trivial as pins.

What caught his attention was the way the work had been divided.

No one in the room was actually making a pin.

One man pulled wire. Another straightened it. Another cut it. Someone else sharpened the ends, someone formed the heads, another attached them, and others finished and packed. Smith noted that the ten workers in that shop made upwards of forty-eight thousand pins a day between them, while a man working alone could not have made twenty, perhaps not even one.

Before factories like this, an entire village in some countries might have had only a single pin. Not because pins were mysterious or the materials were scarce, but because making one from scratch, end to end, was genuinely hard. The new factory did not invent new skills.

Its real advantage was that no one had to carry the entire process at once.

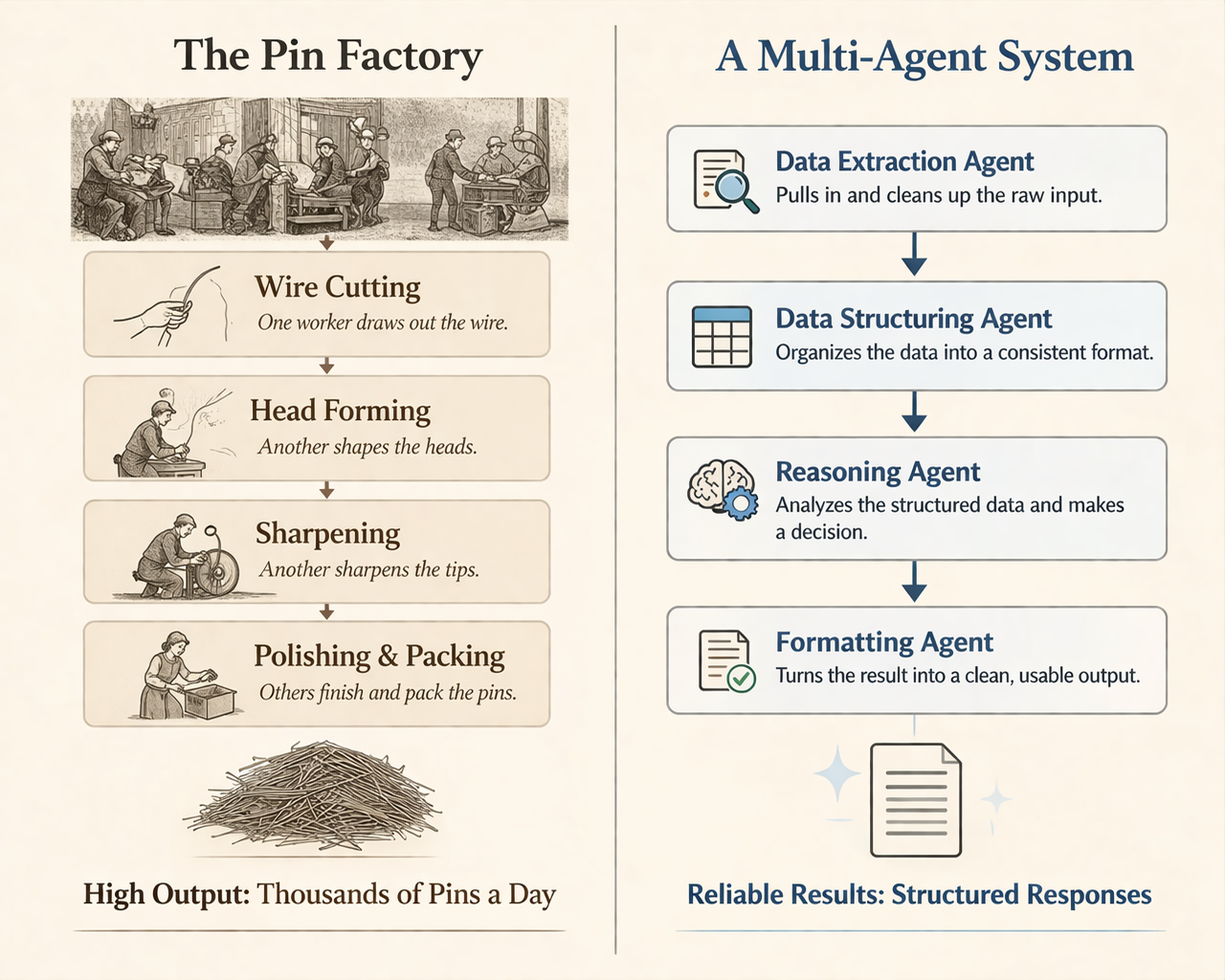

We may be at a similar moment with AI agents. The models can already reason, extract, summarize, and decide. But a lot of teams still use them the way the village used labor: one general-purpose agent expected to do the whole job from scratch every time.

One Agent Doing Everything

At a previous company in manufacturing finance, we built an underwriting agent for banks. On paper, the job looked simple enough: ingest emails and attachments, pull out the relevant data, normalize it, compare it against the bank’s criteria, then recommend a decision. Approve, deny, or flag for review.

We started with one agent doing all of it. In demos, it looked good. Then we tried to make it hold up across real submissions: messy email threads, inconsistent attachments, and slightly different ways of saying the same thing.

That was where it started to drift in subtle ways.

- Extraction would miss a field it had caught the last time because the PDF layout changed.

- Normalization would wobble: the same data point came out formatted differently depending on what else was in the submission.

- Sometimes the analysis would bleed into the extraction, and the agent would start making judgments before it had finished collecting facts.

Nothing failed loudly, which made it worse. You could not point to one broken part. You just stopped trusting the output.

So we did the usual thing. Tightened the prompt and added rules. Then added exceptions to the rules. Then rules for the exceptions. It would improve for a stretch, then slip somewhere else.

After a while it stopped feeling like engineering. Most of our effort was going into controlling the agent's behavior, not into better underwriting.

One Transformation at a Time

What fixed it was shrinking the job.

Instead of one system ingesting an email and producing a decision, we split the work into a sequence of transformations:

- An extraction agent that pulled data from emails and attachments, and did nothing else

- A normalization agent that mapped raw extraction into a consistent schema

- An analysis agent that evaluated the normalized data against the bank’s criteria

- A decision agent that proposed approve, deny, or flag for review based only on the structured analysis
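A minimal, framework-agnostic sketch of that four-stage shape, assuming each stage can be modeled as a plain function (in a real system each would wrap a model call, and nothing here uses Mastra's API). The types, field names, and the two underwriting criteria are hypothetical illustrations, not the bank's actual schema or rules. What the sketch is meant to show is the narrowing of inputs: `decide` can only see an `Analysis`, so it has no way to reach back into raw email text.

```typescript
// Hypothetical types for illustration; not the schema from the original system.
type RawEmail = { body: string; attachments: string[] };

// Extraction output: raw strings exactly as found, possibly incomplete.
type Extracted = Record<string, string | undefined>;

// Normalization output: one fixed schema with consistent types and units.
type Normalized = { borrower: string; annualRevenueUsd: number; requestedAmountUsd: number };

// Analysis output: per-criterion results, but no decision yet.
type Analysis = { checks: { name: string; passed: boolean }[] };

type Decision = "approve" | "deny" | "flag_for_review";

// Stage 1 (stub): pull "Key: value" lines out of the email body. A real stage would call a model.
function extract(email: RawEmail): Extracted {
  const out: Extracted = {};
  for (const line of email.body.split("\n")) {
    const match = line.match(/^\s*([\w ]+):\s*(.+)$/);
    if (match) out[match[1].trim().toLowerCase()] = match[2].trim();
  }
  return out;
}

// Parse a money string like "$1,200,000" into a number, failing loudly on bad input.
function parseUsd(value: string | undefined, field: string): number {
  if (value === undefined) throw new Error(`missing field: ${field}`);
  const digits = value.replace(/[^0-9.]/g, "");
  const n = Number(digits);
  if (digits === "" || isNaN(n)) throw new Error(`unparseable amount for ${field}: ${value}`);
  return n;
}

// Stage 2: map raw extraction into the fixed schema.
function normalize(raw: Extracted): Normalized {
  const borrower = raw["borrower"];
  if (!borrower) throw new Error("missing field: borrower");
  return {
    borrower,
    annualRevenueUsd: parseUsd(raw["annual revenue"], "annual revenue"),
    requestedAmountUsd: parseUsd(raw["requested amount"], "requested amount"),
  };
}

// Stage 3: evaluate normalized data against hypothetical criteria.
function analyze(data: Normalized): Analysis {
  return {
    checks: [
      { name: "min_revenue", passed: data.annualRevenueUsd >= 500000 },
      { name: "loan_to_revenue", passed: data.requestedAmountUsd <= 0.25 * data.annualRevenueUsd },
    ],
  };
}

// Stage 4: the decision sees only the structured analysis, never the raw email.
function decide(analysis: Analysis): Decision {
  const failed = analysis.checks.filter((c) => !c.passed).length;
  if (failed === 0) return "approve";
  if (failed === analysis.checks.length) return "deny";
  return "flag_for_review";
}

// The pipeline is just the stages composed in order.
function underwrite(email: RawEmail): Decision {
  return decide(analyze(normalize(extract(email))));
}

const email: RawEmail = {
  body: "Borrower: Acme Tooling\nAnnual revenue: $1,200,000\nRequested amount: $250,000",
  attachments: [],
};
console.log(underwrite(email)); // → approve
```

Because `normalize` throws on a missing or unparseable field instead of guessing, a gap in extraction surfaces at a stage boundary rather than quietly shaping the decision.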

Each step got easier to validate. If extraction missed a field, we caught it before it contaminated the rest of the process. If a new attachment format showed up, we updated one part instead of rewriting a giant prompt.
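Catching a missed field before it spreads can be as simple as a check at the extraction boundary. Here is a minimal sketch, assuming the extraction step returns a flat map of field names to strings. `REQUIRED_FIELDS`, `checkExtraction`, and `StepResult` are hypothetical names, and the field list is illustrative only.

```typescript
// Hypothetical required fields for the extraction step's output.
const REQUIRED_FIELDS = ["borrower", "annual revenue", "requested amount"] as const;

type Extracted = Record<string, string | undefined>;

// Either the validated value or the list of problems found.
type StepResult<T> = { ok: true; value: T } | { ok: false; errors: string[] };

// Validate extraction output before normalization ever sees it.
function checkExtraction(raw: Extracted): StepResult<Extracted> {
  const errors = REQUIRED_FIELDS
    .filter((field) => (raw[field] ?? "").trim() === "")
    .map((field) => `extraction missed required field: ${field}`);
  return errors.length === 0 ? { ok: true, value: raw } : { ok: false, errors };
}

const result = checkExtraction({ borrower: "Acme Tooling", "annual revenue": "$1,200,000" });
if (!result.ok) {
  console.log(result.errors.join("\n")); // → extraction missed required field: requested amount
}
```

Returning the errors rather than throwing leaves the caller free to decide what happens next: retry extraction, or send the file to a person.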

(If you're curious what I use to build pipelines like this, it’s Mastra.)

By the time the system reached the part that actually required judgment, it was working with something much more predictable.

Once you chain steps together this way, the system stops acting like a single pass and starts acting more like a pipeline. You can route based on outputs. You can reject weak results, loop a step until it passes, or branch the flow entirely. None of that works well when one agent is still carrying too much of the process.
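One way that routing might look, as a framework-agnostic sketch: run a step, validate its output, retry a bounded number of times, and branch to human review if it never passes. `runWithRetry`, `Validated`, `Route`, and the flaky extractor stub are hypothetical. Steps are synchronous here for brevity, whereas real model calls would be async, and frameworks such as Mastra provide their own workflow primitives that this sketch deliberately does not use.

```typescript
type Validated<T> = { ok: true; value: T } | { ok: false; errors: string[] };

// Either continue down the pipeline, or branch out to a person.
type Route<T> = { kind: "continue"; value: T } | { kind: "human_review"; errors: string[] };

// Run a step, validate its output, and retry up to maxAttempts times.
// If it never passes, branch to human review instead of passing weak output downstream.
function runWithRetry<I, O>(
  step: (input: I, attempt: number) => O,
  validate: (output: O) => Validated<O>,
  input: I,
  maxAttempts: number,
): Route<O> {
  let lastErrors: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const result = validate(step(input, attempt));
    if (result.ok) return { kind: "continue", value: result.value };
    lastErrors = result.errors;
  }
  return { kind: "human_review", errors: lastErrors };
}

// Hypothetical flaky extractor: misses the amount on the first attempt only.
const flakyExtract = (text: string, attempt: number): Record<string, string> =>
  attempt === 1 ? { borrower: text } : { borrower: text, amount: "250000" };

// Reject any output that lacks an amount.
const hasAmount = (out: Record<string, string>): Validated<Record<string, string>> =>
  "amount" in out ? { ok: true, value: out } : { ok: false, errors: ["missing amount"] };

const route = runWithRetry(flakyExtract, hasAmount, "Acme Tooling", 3);
console.log(route.kind); // → continue
```

Capping the attempts matters: an unbounded loop on a step that can never pass just hides the failure. A fuller version might also feed `lastErrors` back into the retried step so it knows what it missed.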

Knowing When to Split

The real shift for us came when we stopped treating it as a pure technology problem and looked at how underwriting already works inside a bank.

Like the pin factory, a human underwriting flow doesn't have one person doing everything. Someone handles document collection: chasing emails, pulling attachments, and making sure the file is complete. Someone else extracts the data and gets it into a usable form. An analyst runs the numbers. A reviewer checks the work. Then someone recommends approval or denial.

That structure was not invented for elegance. It probably evolved the hard way, through mistakes, missed details, and too many things living in one person’s head at once. That was more or less the position our single-agent system was in.

So we modeled the agent system after the human one. An extraction agent for pulling data from documents. A normalization agent for getting that data into a stable schema. An analysis agent working from clean inputs. A decision agent making a recommendation from the analysis.

Each agent got simpler. The system got easier to trust. And when something broke, we knew where to look.

That is also why I was interested to see the recent paper From Skills to Talent: Organising Heterogeneous Agents as a Real-World Company. It lands very close to the same conclusion. The moment you stop asking one agent to do the whole job, you start needing the same things a pin factory needed: explicit roles, coordination, handoffs, and operating knowledge that lives in the system instead of in one worker’s head. The paper gives that argument a formal, dynamic multi-agent framework.

The Model Worth Carrying

The moment you find yourself writing a long prompt that tries to do several things at once (enforce structure, reason, format, and handle edge cases), you're fighting the same battle Smith's lone pin-maker was fighting.

That tends to be the real design skill in agent systems. Not building the most impressive agent, but noticing when a task has quietly split into several smaller ones.

Systems built this way often look less magical from the outside, but they just keep working.